Serverless computing is a model in which backend services are provided on an as-used basis. Users do not have to worry about the underlying infrastructure; they can simply write and deploy their code, and the serverless provider handles the rest. A customer pays only for the amount of computation they actually use, not for a fixed amount of bandwidth or a fixed number of servers, because the service scales automatically. Despite the name, physical servers do exist, but developers need not be aware of them, since managing them is not part of their work.

THE NEED FOR SERVERLESS COMPUTING:

Back in the day, anyone who built a web application needed their own physical hardware to run the server. This was not only very expensive but also cumbersome. Cloud computing was a boon to those facing these problems: developers and companies could rent a fixed amount of server space from a remote provider. Those who rented such space often bought more than they needed, so that a spike in activity would not push them past their monthly limits and bring their applications down.

Because of this over-purchasing, much of the server space went to waste, a considerable loss of both resources and money. Cloud service providers introduced auto-scaling models to address the issue. But even with auto-scaling, an unwanted spike in activity, such as a DDoS attack, can still prove very expensive.

Serverless computing stands out because developers pay only for the services they use. It neither caps the amount of service available nor forces developers to pay for services they did not use. The difference resembles two mobile data plans: one charges a fixed monthly fee for a capped amount of data, while the other charges only for the bytes actually used. Although the model is called “serverless”, real servers still run these services; the provider manages them entirely, so the customer can focus on their own work.
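The data-plan comparison above can be sketched in a few lines of Python. The prices and function names here are made up purely for illustration and do not reflect any real vendor's rates.

```python
# Hypothetical prices, chosen only to illustrate the two billing models.
FIXED_MONTHLY_FEE = 100.00          # flat fee for a reserved server, per month
PRICE_PER_MILLION_REQUESTS = 0.50   # pay-per-use price per one million requests


def fixed_plan_cost(requests: int) -> float:
    """A fixed plan costs the same no matter how many requests are served."""
    return FIXED_MONTHLY_FEE


def pay_per_use_cost(requests: int) -> float:
    """A pay-per-use plan charges in proportion to actual usage."""
    return requests / 1_000_000 * PRICE_PER_MILLION_REQUESTS


for monthly_requests in (0, 2_000_000, 50_000_000):
    print(
        f"{monthly_requests:>10,} requests: "
        f"fixed = {fixed_plan_cost(monthly_requests):6.2f}, "
        f"pay-per-use = {pay_per_use_cost(monthly_requests):6.2f}"
    )
```

With these assumed numbers, an idle month costs nothing under the pay-per-use plan, while the fixed plan charges the full fee regardless of usage.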

WHAT ARE THE ADVANTAGES OF SERVERLESS COMPUTING?

Cost-Effectiveness: Serverless computing is generally much more cost-efficient than traditional cloud computing services. With traditional backend cloud services, users often end up paying for unused server space and idle computing time, whereas with serverless computing they pay only for what they use.

Easy Scaling: Unlike with traditional backend cloud services, developers generally do not have to worry about scaling policies when they want to scale up their code. With serverless computing, the vendor of the backend service takes care of all scaling.

Simple Backend Code: Serverless computing makes use of FaaS (Function as a Service), which lets developers create simple functions that each perform a single task independently.
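As a rough illustration, a single-purpose function might look like the sketch below. The `handler(event)` signature and the shape of the response dictionary are assumptions made for this example; each FaaS vendor defines its own handler interface.

```python
import json


def handler(event: dict) -> dict:
    """Single-purpose function: greet the caller named in the request.

    The `event` dictionary and the returned structure are hypothetical;
    a real platform passes its own request object and expects its own
    response format.
    """
    name = event.get("name", "world")
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


# Local invocation for illustration; on a real platform the vendor calls the handler.
print(handler({"name": "Ada"}))
```

The function holds no state and does one thing only, which is what allows a vendor to run as many copies of it as the incoming traffic requires.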

Quick Turnaround: Serverless architecture can significantly cut a product's time to market. Developers do not need a complicated deployment process to roll out bug fixes and new features; they can add and modify code on a piecemeal basis.

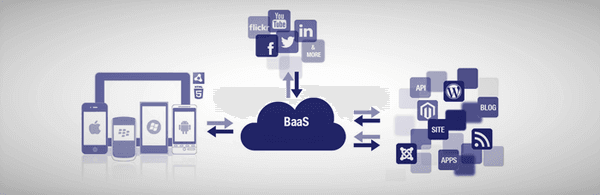

Most serverless computing vendors offer database and storage services to their customers. Many of them also have FaaS platforms, such as Cloudflare Workers. FaaS lets developers execute small pieces of code on the network edge. With FaaS, developers can build a more scalable codebase without spending any resources on maintaining the underlying backend.

THE FUTURE OF SERVERLESS COMPUTING:

Serverless computing continues to evolve, and providers keep working on solutions to the few drawbacks the architecture has. One such drawback is the cold start: the latency experienced when a function has to be started from scratch. When a function has not been used for a while, the vendor usually shuts it down to prevent over-provisioning and to save energy.

The next time a user calls the function, it has to be started afresh, and this startup adds noticeable latency: a cold start. Cold starts are a common problem in serverless computing. While the function keeps running and is called again and again, it experiences warm starts, but if it goes dormant again, the next call triggers another cold start.
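The cycle of cold and warm starts can be modeled with a small simulation. This is only a toy sketch: the class name, the `idle_timeout` parameter, and the 300-second value are assumptions for illustration, and real vendors decide when to shut functions down by their own rules.

```python
class SimulatedFunction:
    """Toy model of a function that the vendor shuts down after being idle."""

    def __init__(self, idle_timeout: float) -> None:
        self.idle_timeout = idle_timeout  # seconds of inactivity before shutdown (hypothetical)
        self.last_call = None             # time of the previous invocation, if any

    def invoke(self, now: float) -> str:
        """Return 'cold' if the function must be started fresh, else 'warm'."""
        is_cold = self.last_call is None or now - self.last_call > self.idle_timeout
        self.last_call = now
        return "cold" if is_cold else "warm"


fn = SimulatedFunction(idle_timeout=300)
call_times = [0, 10, 20, 1000, 1005]  # seconds since the first request
print([fn.invoke(t) for t in call_times])
# → ['cold', 'warm', 'warm', 'cold', 'warm']
```

The first call and the call after the long pause are cold; every call that arrives within the idle window is warm.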

Recently, a solution to this trade-off was found: serverless functions can now be spun up in advance, which avoids cold starts altogether. The result is a FaaS platform with no cold starts. As more drawbacks like this are addressed, serverless computing will keep gaining popularity, and more companies and developers will adopt it as a cost-effective alternative to traditional cloud computing.